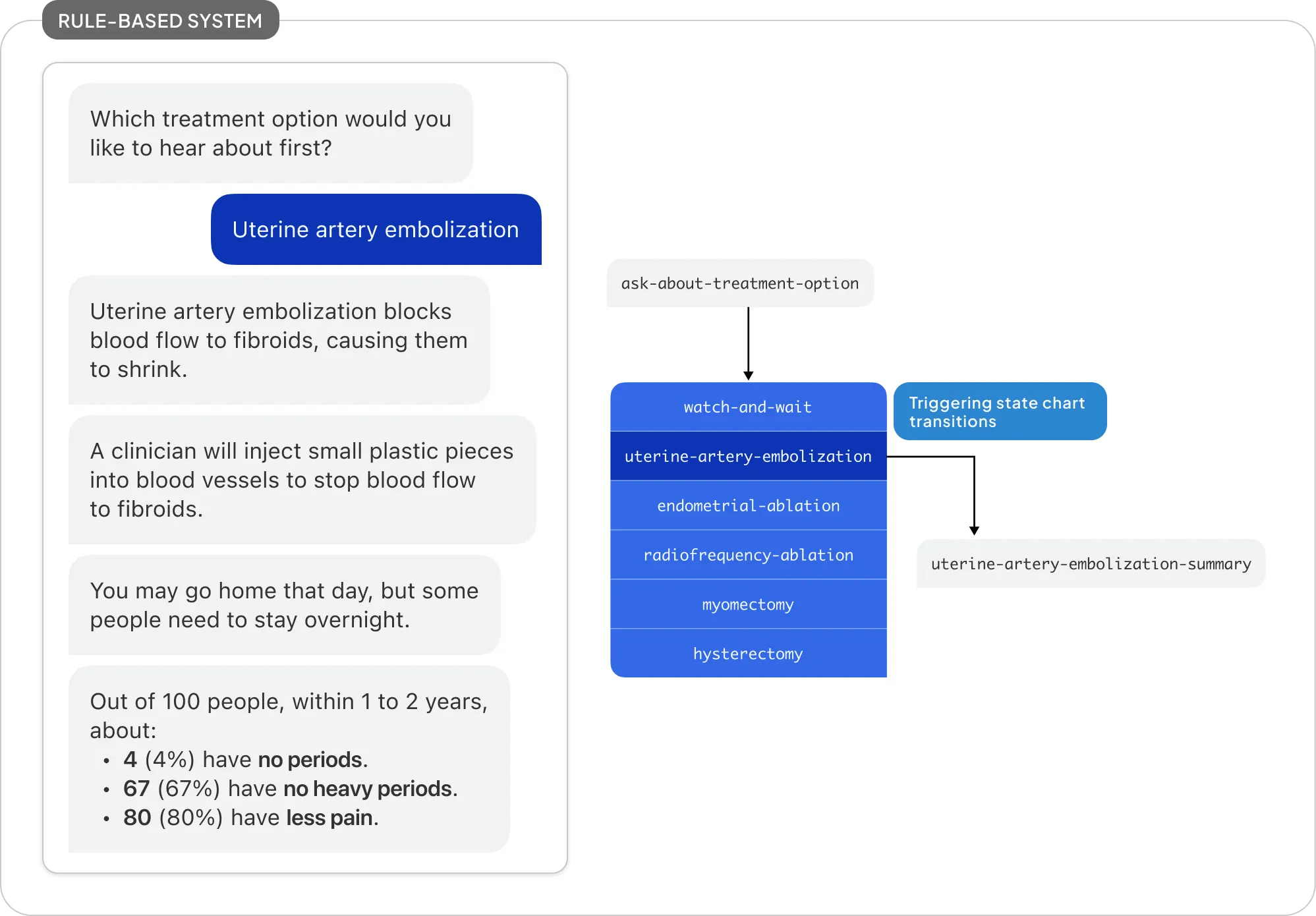

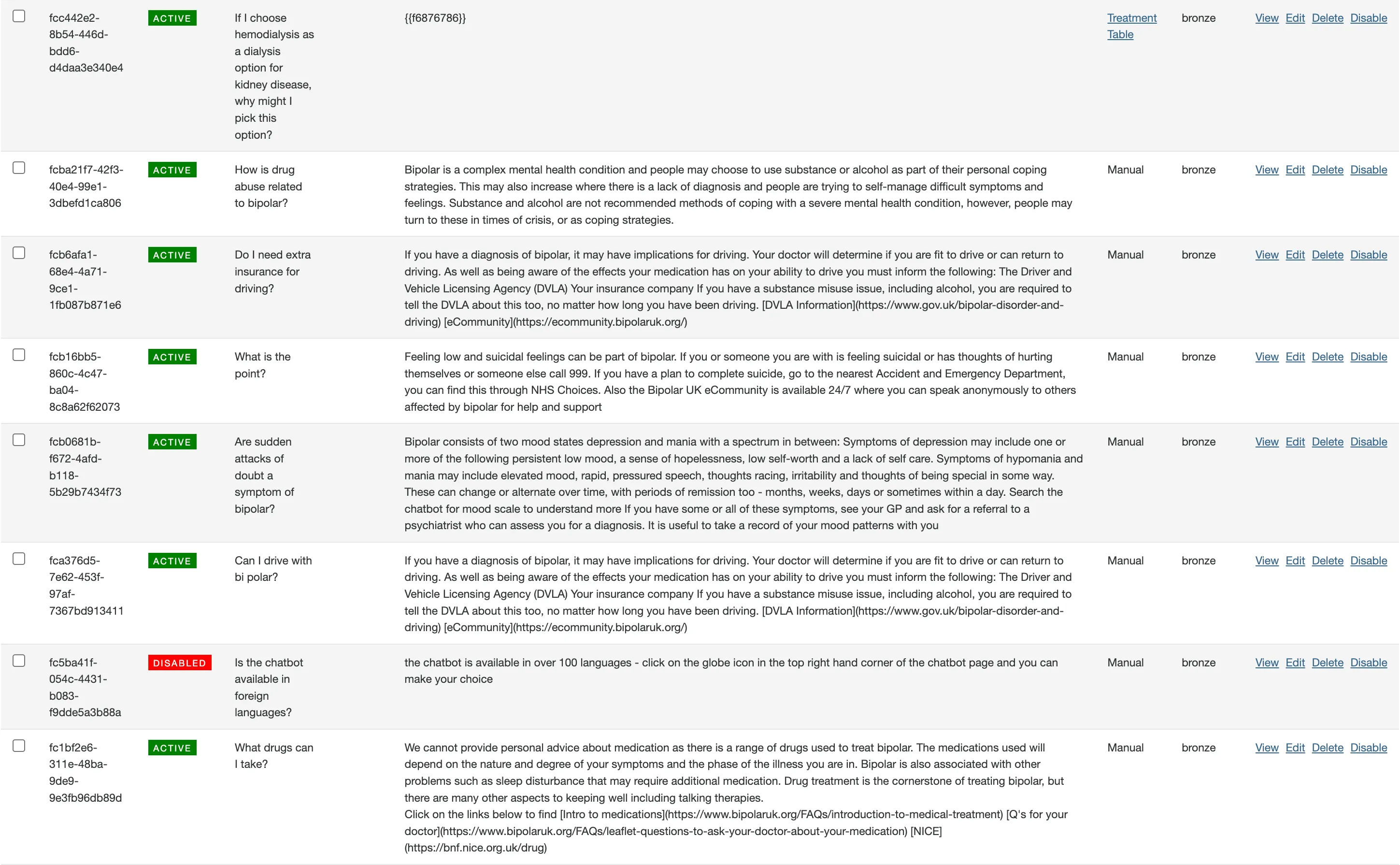

Fora Health is an app that supports shared decision-making between patients and their healthcare practitioners. It was first designed as a rule-based chatbot, back in the days before large language models and their APIs were widely available. With the advent of LLMs, we saw an opportunity to enhance the user experience by integrating LLM functionality into Fora Health’s existing feature set.

Of course, because Fora Health operates in a healthcare context, we had to be careful about how we integrated LLMs. Our goal from the beginning was to be transparent about LLM usage, and to ensure that LLMs complemented our rule-based chat framework rather than replacing it. Fora Health was envisioned as a reliable, trustworthy tool, and maintaining a limited set of chat paths was essential to giving us the control and predictability we needed to work with patients and their providers.

The question then became: how do we integrate LLMs, which are notorious for the exact opposite of predictability, into our user experience in a way that doesn’t compromise the safety of our regular, deterministic chatbot?

Ask Fora Health

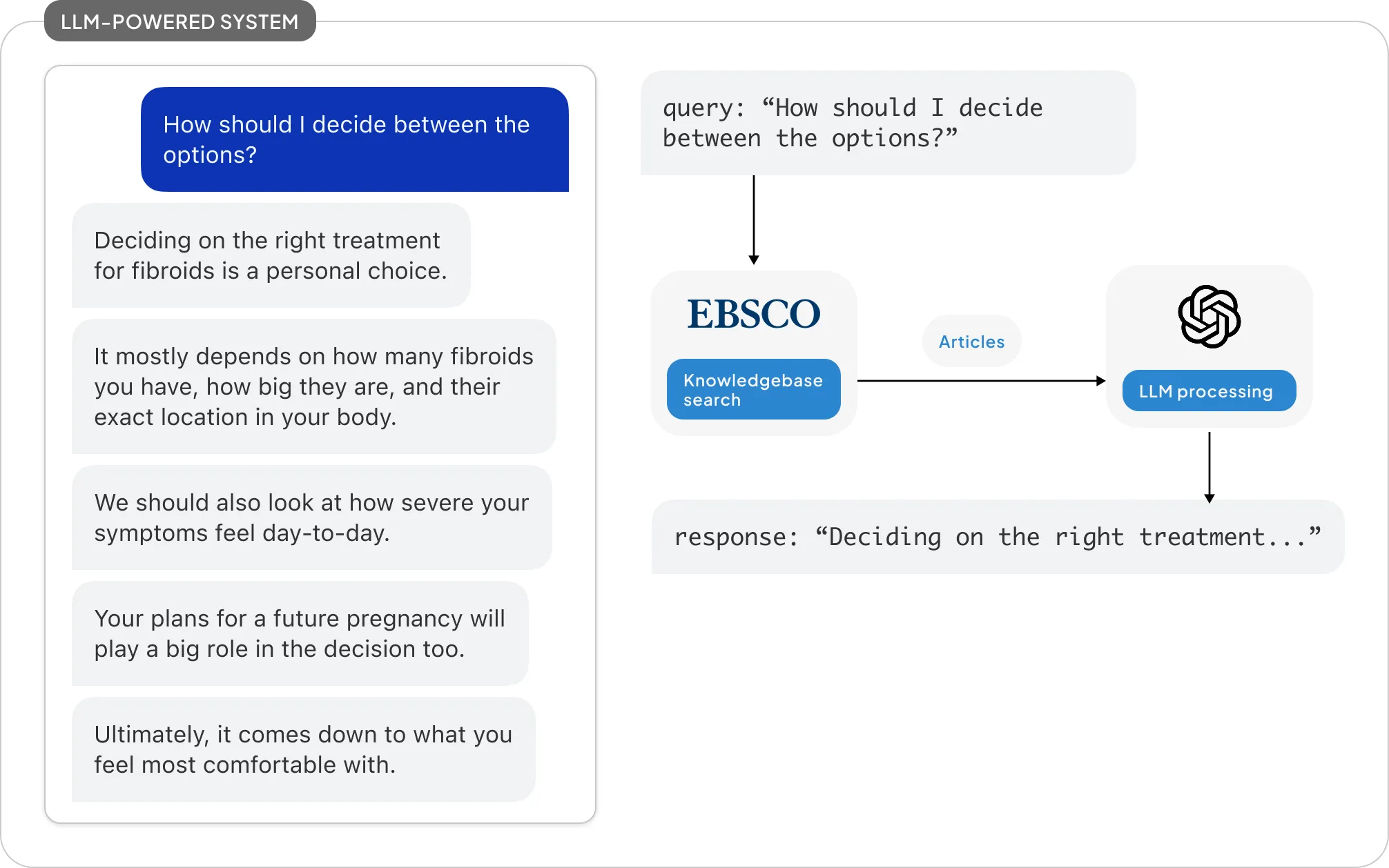

Our product strategists wanted LLMs to act as a layer between patients and the resources provided by our content partners, such as EBSCO and Bipolar UK. These resources, though extremely comprehensive in scope and depth, are often too dense and inaccessible for the average user, let alone someone who may already be experiencing significant distress and cognitive load.

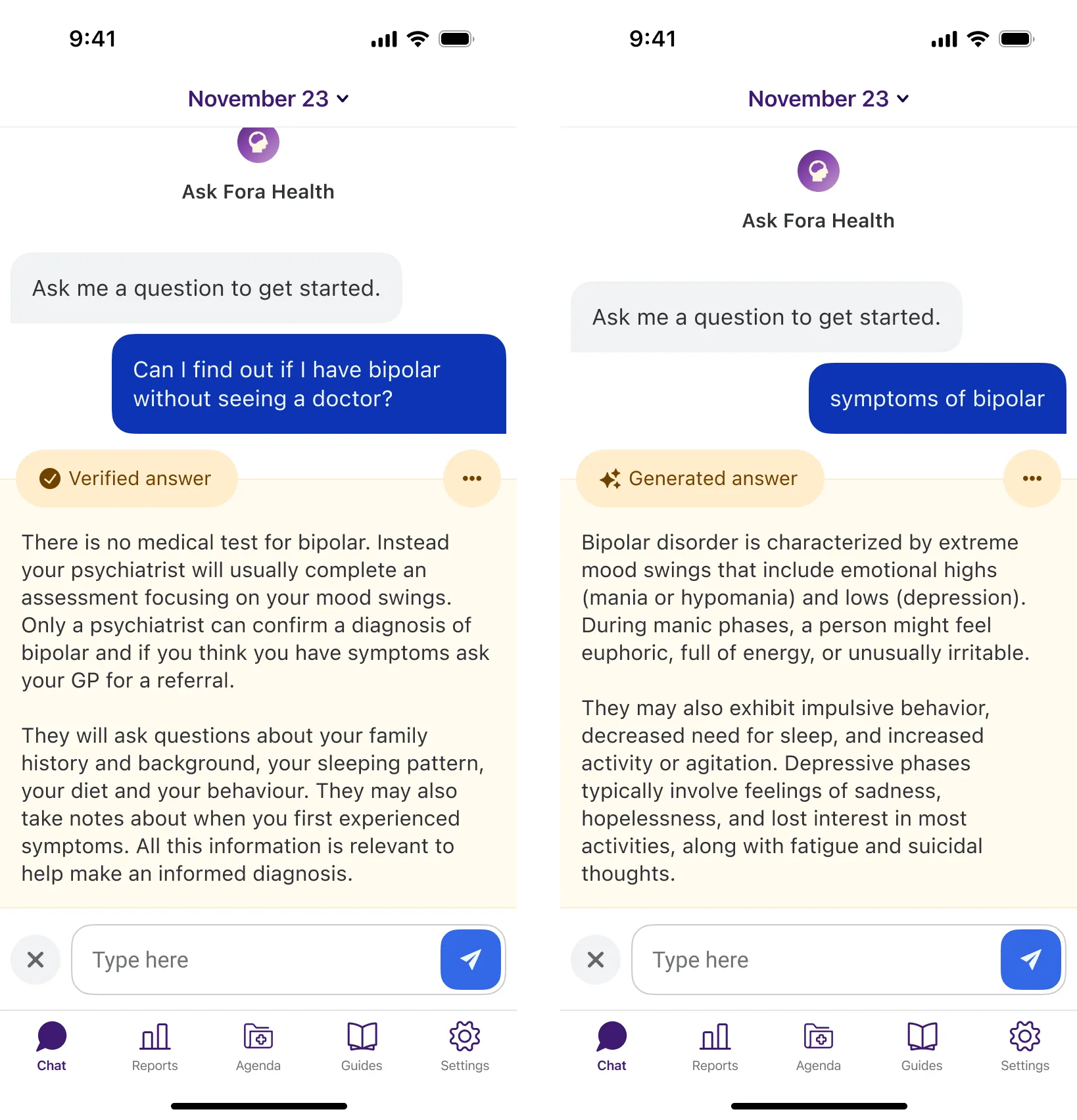

At Fora Health, we use the conversational interface as an accessible, natural way to frame all sorts of healthcare management features like symptom tracking and medication adherence.

A typical chat interaction with Fora Health’s usual rule-based chatbot. The user interacts with a predetermined dialogue tree, with fixed inputs and outputs.

It felt natural to use this same interface to help users understand the resources available to them. We envisioned a question-and-answer system (“Ask Fora Health”) that uses the LLM to distill complex information from various knowledge bases into simple, helpful answers to any questions the user might have.

From a technical standpoint, we used retrieval-augmented generation (RAG), which allowed the LLM to draw on actual, verified content from our partners to generate simple, accessible answers to user queries. This approach helped keep the LLM grounded in accurate information, while still providing the flexibility and natural language capabilities that make LLMs so powerful.
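To make the retrieve-then-generate pattern concrete, here is a minimal Python sketch of the loop. It is not our production code: it assumes a toy bag-of-words `embed` function in place of a real embedding model and an in-memory list in place of a vector database, and it stops at building the grounded prompt rather than calling an actual LLM. The `Chunk` type, the helper names and the sample text are all hypothetical.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass


@dataclass
class Chunk:
    text: str
    source: str  # hypothetical: which partner resource the chunk came from


def embed(text: str) -> Counter:
    # Toy stand-in for an embedding model: lowercase word counts.
    return Counter(re.findall(r"[a-z']+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question: str, chunks: list[Chunk], k: int = 3) -> list[Chunk]:
    # Rank stored chunks by similarity to the question and keep the top k.
    q = embed(question)
    return sorted(chunks, key=lambda c: cosine(q, embed(c.text)), reverse=True)[:k]


def build_prompt(question: str, context: list[Chunk]) -> str:
    # Ground the model: ask it to answer only from the retrieved partner excerpts.
    excerpts = "\n".join(f"[{i + 1}] {c.text}" for i, c in enumerate(context))
    return (
        "Answer in simple, plain language using only the excerpts below. "
        "If they do not contain the answer, say so.\n\n"
        f"Excerpts:\n{excerpts}\n\nQuestion: {question}"
    )


chunks = [
    Chunk("Lithium is a mood stabiliser often used to treat bipolar disorder.", "Partner A"),
    Chunk("Regular sleep routines can help reduce the risk of mood episodes.", "Partner B"),
]
question = "What is lithium used for?"
top = retrieve(question, chunks, k=1)
print(top[0].source)  # Partner A
print(build_prompt(question, top))
```

The key design point is that the model only ever sees vetted partner text alongside the question, which narrows (but does not eliminate) the room for made-up answers.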

My goal, on the other hand, was to design the user-facing experience in a way that stayed true to Fora Health’s core reliability and trustworthiness.

What We Did Not Do

A helpful way to understand how we went about this design problem is to consider what we actively decided not to do. A straightforward approach would simply be to reuse our existing UI with minimal tweaks. After all, does it really matter how a chatbot’s messages are generated, as long as the end result is useful to the user?

A theoretical chat design using our pre-existing UI to frame an LLM-powered question-answer interaction.

I’d argue that in our specific context, it did.

Fora Health’s vanilla chatbot interface is rule-based for a reason: safety and predictability were our primary concerns, especially when dealing with sensitive healthcare topics. This pattern of interaction establishes a mental model in users that creates trust and a sense of security.

While LLMs are powerful tools, they are also notorious for their unpredictability and potential for generating incorrect or misleading information. RAG mitigates a portion of that risk by grounding answers in validated source material, but it is not risk-free.

In a rule-based system, bot messages are pre-written responses to pre-written user inputs, and are deterministic in nature. Users can feel confident that the information they receive is vetted and reliable.

On the other hand, Ask Fora Health’s LLM responses are generated on-the-fly, based on free-text user inputs. This means the bot’s responses are stochastic in nature, and cannot be fully predicted or controlled.

Using the same conversational interface to deliver content that isn’t 100% reliable was clearly not the right approach. At best, it would erode the trust we had built in the rest of the app whenever LLM responses fell short; at worst, it could be seen as hijacking that existing trust to ingratiate the LLM with users.

This was something we were against doing on principle, even if the LLM’s responses turned out fairly benign in reality.

Transparency and Agency

It was important that we designed this feature to be transparent about the risk behind the technology powering it, and allow users to maintain agency over their interactions with the LLM. This meant being honest and clear about LLM usage whenever it happens, instead of leaving it as a hidden aspect of the experience.

However, we also did not want preconceived notions of LLMs to dissuade users from trying out the feature altogether. After all, the RAG-based approach did come up with useful and accurate answers during our testing, and was specifically tuned and equipped for our use case. Our user research also suggested that it was a valuable tool for patients to learn more about their conditions.

Our design thus had to strike a careful balance between being open about LLM usage and convincing users that it was, in a way, “not like the others”.

What We Came Up With

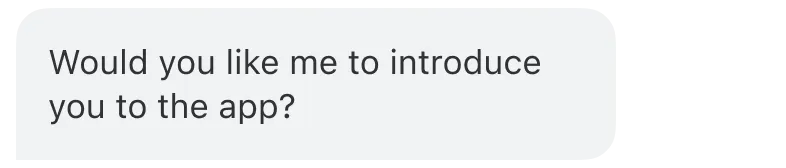

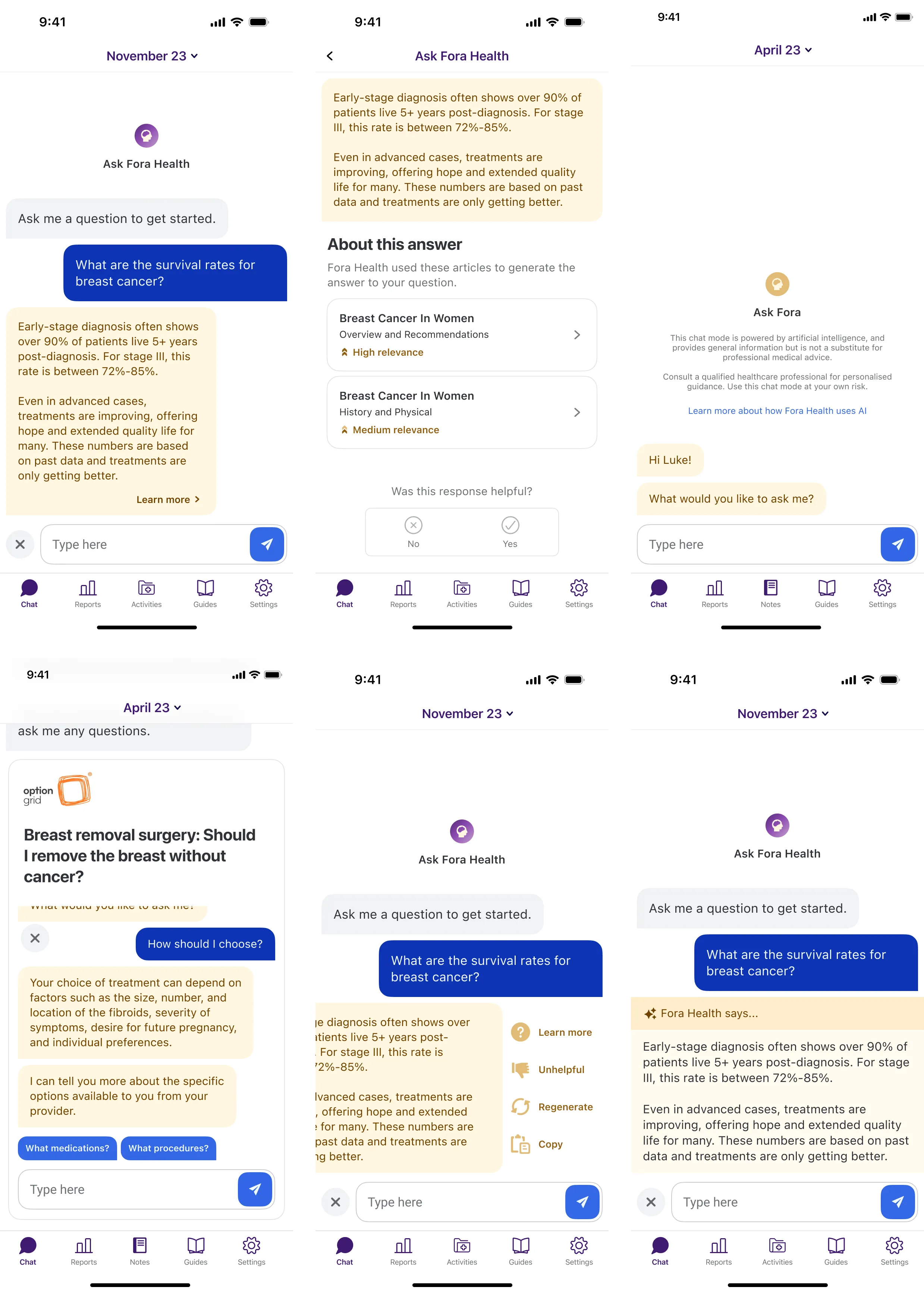

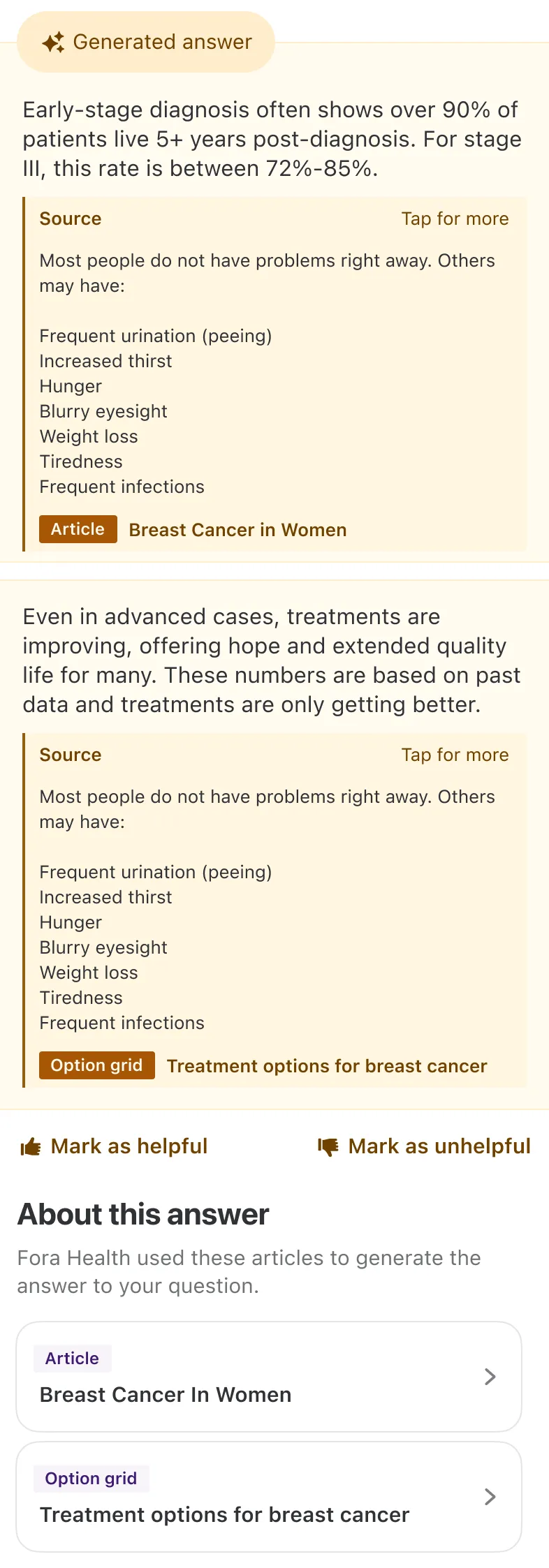

Our final design for a typical interaction loop with Ask Fora Health looks something like this:

We found this balance by keeping Ask Fora Health within the chat interface, but creating a distinct visual separation from the rest of the user experience.

Comparing this to our earlier theoretical design, we can see several key differences:

- Colour scheme: Normal chatbot messages use a neutral grey, as they are the most commonplace piece of UI. In contrast, Ask Fora Health uses a yellow-brown colour palette drawn from the tertiary colour in our design system. We would eventually adopt this colour scheme for every LLM-powered feature in our app to signal this change in context.

- No bubbles: We dropped the chat bubble motif entirely for Ask Fora Health messages. This further distances the LLM-powered feature from the rest of the chatbot experience, and also echoes the look of existing LLM chatbot interfaces.

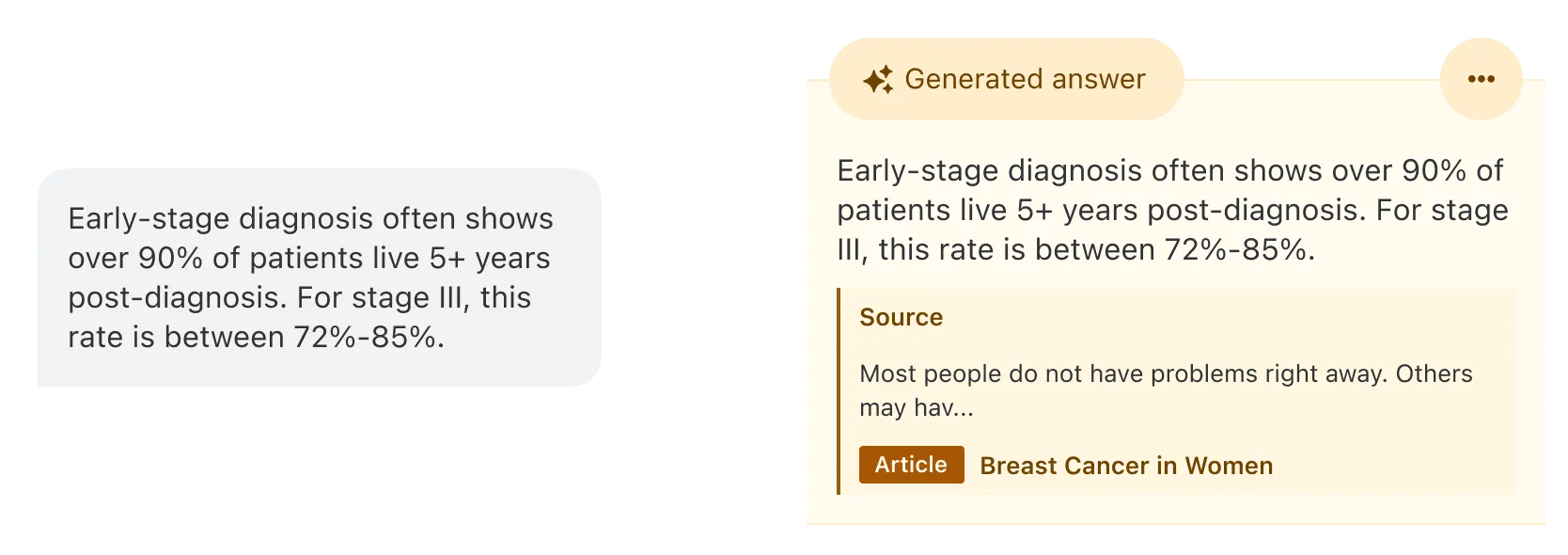

- Labelled responses: Our implementation for Fora Health created different tiers of answers. “Verified” responses were pre-generated answers to common questions that had been verified by humans, while “Generated” responses were created on-the-fly by the LLM using RAG. These labels communicated the nature of each answer to users, helping them understand the reliability of the information they were receiving.

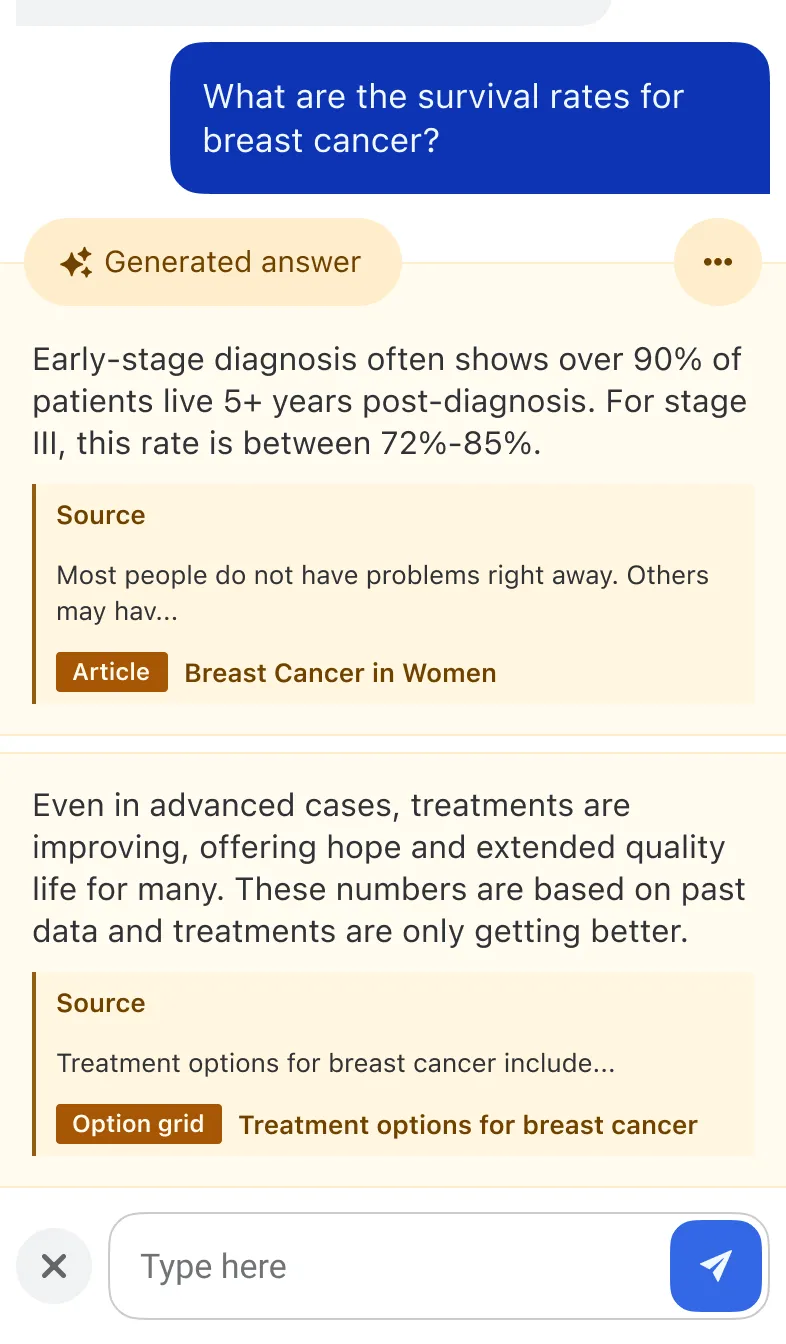

- Sources: Another impressive feat by our backend engineer was the ability to include source citations for LLM-generated answers. This not only adds credibility to the responses, but also allows users to further explore the original content if they wish.

- More button: Finally, the “More” button indicates that there is additional information available beyond the text response. This allows users curious about the provenance of an answer to dig deeper, without overwhelming those who just want a quick response.

It goes without saying that this wasn’t all designed in one go. We iterated on this design multiple times, mulling over what to present first and what to hide behind additional interactions, all while keeping user trust and safety at the forefront of our decisions.

We wanted to be transparent and honest, but we also didn’t want to overwhelm users with information. After all, too much information can be just as detrimental as too little.

We found a balance in the final design that achieved the goals we set out to accomplish: being open about our use of LLMs in this feature, and taking the opportunity to build additional trust, rather than eroding it.

Building trust

Creating a distinct visual feel for Ask Fora Health solved one side of the balance: being open about LLM usage. There was still the other side of the scale: convincing users that it was “not like the others”. I briefly touched on this with the addition of UI elements like labels and sources, but I think it’s worth going deeper into how we achieved this, and why it was important.

What made an Ask Fora Health conversation different from, say, a more general-purpose chatbot like ChatGPT or Gemini was that it was uniquely tuned to the Fora Health context. A user’s question didn’t feed directly into the answer they received; instead, it was first used to retrieve factual information from our trusted content partners, which then went through a contextual re-ranking process before the final response was generated.
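As a rough illustration of the contextual re-ranking step, here is a minimal Python sketch. It assumes the vector search has already returned candidate chunks with similarity scores, and it stands in for the real re-ranker with a simple, hypothetical blend of that score and keyword overlap with terms drawn from the user’s own Fora Health data. The `Candidate` type, the `rerank` function and the 0.5 weight are illustrative, not the production implementation.

```python
import re
from dataclasses import dataclass


@dataclass
class Candidate:
    text: str
    retrieval_score: float  # similarity from the vector search (0 to 1)


def words(text: str) -> set[str]:
    return set(re.findall(r"[a-z]+", text.lower()))


def context_overlap(text: str, user_terms: set[str]) -> float:
    # Fraction of the user's context terms that appear in the chunk.
    if not user_terms:
        return 0.0
    return len(words(text) & user_terms) / len(user_terms)


def rerank(candidates: list[Candidate], user_terms: set[str],
           keep: int = 5, weight: float = 0.5) -> list[Candidate]:
    # Blend vector similarity with relevance to the user's own data.
    # The linear blend and default weight are illustrative assumptions.
    def score(c: Candidate) -> float:
        return (1 - weight) * c.retrieval_score + weight * context_overlap(c.text, user_terms)

    return sorted(candidates, key=score, reverse=True)[:keep]


top_100 = [  # in practice, many more chunks would come back from the vector search
    Candidate("Antidepressants and bipolar disorder", 0.82),
    Candidate("Lithium and sleep: what to expect", 0.78),
    Candidate("General tips for managing stress", 0.80),
]
user_terms = {"lithium", "sleep"}  # e.g. from a user's medication and symptom logs
print([c.text for c in rerank(top_100, user_terms, keep=2)])
# ['Lithium and sleep: what to expect', 'Antidepressants and bipolar disorder']
```

Separating a broad retrieval pass from a narrower, context-aware re-ranking pass lets the cheap vector search cast a wide net while the final answer is built from only the handful of chunks most relevant to this particular user.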

```mermaid
graph TD
    subgraph query[" "]
        D@{shape: circ, label: "User question"}
        E(Generate embedding)
        D --> E --Search in--> V
    end

    subgraph response[" "]
        F[Chunk re-ranking]
        K@{shape: doc, label: "User's Fora Health data"}
        G[Response generation]
        J@{shape: dbl-circ, label: "Final answer"}
        V --Top 100 chunks--> F
        K --Added context--> F
        F --Top 5 chunks--> G
        G --> J
    end

    subgraph chunking["Preprocessing"]
        A@{shape: docs, label: "Content from partners"}
        B(Processing and chunking)
        C(Generate embeddings)
    end

    subgraph database[" "]
        V@{shape: cyl, label: "Vector database"}
    end

    A --> B --> C --Stored in--> V

    style query fill:none,stroke:none
    style response fill:none,stroke:none
    style database fill:none,stroke:none
```

Suffice it to say that a lot of effort went into making Ask Fora Health different. The question was: how do we communicate this effectively to users? Imagine simply dropping the flowchart above into the app and expecting users to understand, let alone trust, how Ask Fora Health worked.

Instead, we adopted a “show, don’t tell” approach, taking advantage of the extra goodies our technical implementation provided.

Labelling responses

One advantage of Ask Fora Health being a fairly targeted LLM implementation was that we knew exactly which user context we were working with, and what information was most relevant to users. Combined with insights from our earlier exploration-phase research, this gave us a set of common questions, particularly around bipolar disorder, which was our key focus at the time.

This gave us a unique opportunity to pre-generate answers to these questions, and have them verified by human experts. These answers would be labelled as “Verified” in the chat interface, informing users that there was an extra layer of reliability behind them.
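The routing between the two tiers can be sketched as a verified-first lookup. This Python example is an assumption about how such routing might work, not a description of our backend: it matches an incoming question against the human-verified set using simple word-overlap (Jaccard) similarity with an arbitrary 0.6 threshold, and falls back to a stand-in `generate` function, representing the RAG pipeline, when nothing is close enough. `Answer`, `answer`, `fake_rag` and the sample data are hypothetical.

```python
import re
from dataclasses import dataclass
from typing import Callable


@dataclass
class Answer:
    text: str
    label: str  # "Verified" or "Generated"


def tokens(text: str) -> set[str]:
    return set(re.findall(r"[a-z]+", text.lower()))


def jaccard(a: set[str], b: set[str]) -> float:
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def answer(question: str, verified: dict[str, str],
           generate: Callable[[str], str], threshold: float = 0.6) -> Answer:
    q = tokens(question)
    # Find the closest pre-written question that a human has verified.
    best = max(verified, key=lambda v: jaccard(q, tokens(v)), default=None)
    if best is not None and jaccard(q, tokens(best)) >= threshold:
        return Answer(verified[best], "Verified")
    # No close match: fall back to an answer generated on the fly with RAG.
    return Answer(generate(question), "Generated")


def fake_rag(question: str) -> str:
    # Stands in for the retrieval-augmented generation pipeline.
    return f"(generated answer to: {question})"


verified = {"What is bipolar disorder?": "(human-verified answer text)"}
print(answer("what is bipolar disorder", verified, fake_rag).label)  # Verified
print(answer("Can I drink coffee on lithium?", verified, fake_rag).label)  # Generated
```

A real deployment would more likely match on embeddings than on shared words, and would want a conservative threshold: labelling a generated answer as “Verified” would undermine exactly the trust the label is meant to build.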

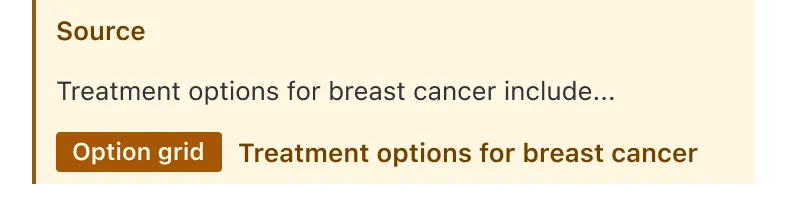

Sources

A side effect of retrieving relevant content chunks for answer generation was that we could also link these chunks back to the original sources they came from. This meant that for any generated answer that used RAG, we could provide citations for users to verify the information themselves.

We explored different ways of surfacing this information, eventually settling on inline truncated quotes, with a “More” button that expanded the answer to show the full sources and rating buttons.
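Because each retrieved chunk can carry a pointer back to its source, building the citation list is mostly bookkeeping. The Python sketch below assumes each chunk is stored with hypothetical `source_title` and `source_url` metadata, keeps one citation per source document, and shortens each quote at a word boundary for the inline view. The function names, the 60-character default and the example.org URLs are illustrative only.

```python
from dataclasses import dataclass


@dataclass
class RetrievedChunk:
    text: str
    source_title: str  # hypothetical metadata stored with each chunk
    source_url: str


def truncate(text: str, limit: int = 60) -> str:
    # Cut the quote at the last word boundary within the limit.
    if len(text) <= limit:
        return text
    return text[:limit].rsplit(" ", 1)[0] + "..."


def citations(chunks: list[RetrievedChunk], limit: int = 60) -> list[dict]:
    # One citation per source document, in retrieval order.
    seen: set[str] = set()
    result = []
    for chunk in chunks:
        if chunk.source_url in seen:
            continue
        seen.add(chunk.source_url)
        result.append({
            "title": chunk.source_title,
            "url": chunk.source_url,
            "quote": truncate(chunk.text, limit),
        })
    return result


chunks = [
    RetrievedChunk("Mood diaries can help you notice early warning signs of an episode.",
                   "Mood diaries", "https://example.org/mood-diaries"),
    RetrievedChunk("Keeping a diary also helps when talking to your care team.",
                   "Mood diaries", "https://example.org/mood-diaries"),
]
for c in citations(chunks, limit=30):
    print(c["title"], "-", c["quote"])
```

Deduplicating by source keeps the inline view short, while the untruncated text of every chunk could still be revealed behind the “More” button.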

Deployment

After several rounds of iteration and refinement, Ask Fora Health has been deployed and is available to organisations working with Fora Health, including a partnership between King’s College London and the South London and Maudsley NHS Foundation Trust in the UK.

This journey towards making a “dumb chatbot smarter” was a careful exercise in balancing transparency, trust and usability. I think we managed to strike that balance well, and it also gave us a chance to solidify our approach to LLM integration moving forward: committing to open and honest LLM usage, while aiming to replace hesitancy with curiosity and trust.